4 Strategies to Improve Participant Retention In Online Longitudinal Research Studies

Whenever researchers conduct a longitudinal research study, one concern takes precedence over almost all others: retaining participants. This is especially true for many studies conducted in the era of COVID-19, as researchers are interested in understanding how people adapt to and cope with rapidly changing circumstances.

Fortunately, retaining participants in longitudinal research studies is easier with technology. In this article, we offer four sampling strategies to maximize retention in longitudinal studies conducted online. We also report data on the effectiveness of these sampling strategies in a large and demanding longitudinal study we conducted.

The tips in this article are drawn from a chapter in the newest book in SAGE’s Innovation in Methods Series, Conducting Online Research on Amazon Mechanical Turk and Beyond. This book, written by Leib Litman and Jonathan Robinson, provides both students and experienced researchers with essential information about the online platforms most often used for social science research.

How to Maximize Longitudinal Study Retention

1. Select the Appropriate Participants for Your Study

Convenience sampling is the most economical and uncomplicated way to source research participants. Having fewer barriers to participation can help a research project reach completion more quickly. This explains the popularity of sites like Mechanical Turk (MTurk).

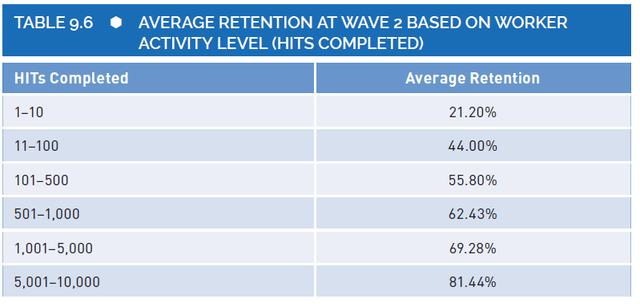

Yet, some participants spend more time on MTurk and complete more studies than others. The experience of these superworkers may pose a problem for some studies, but in other cases, their experience may be an advantage. Experienced survey takers are more likely to complete demanding tasks and more willing to participate in multiple waves of a longitudinal research study.

If the topic of your investigation is not compromised by having experienced research participants, consider sampling people with more experience to minimize attrition. Although you should still weigh the possibility of sampling bias, having participants properly complete multiple waves of the study can contribute significantly to project success. As the accompanying figure shows, participant retention increases linearly as experience on the platform increases.

2. Be Transparent about the Study and Payment

When recruiting participants for research, clear communication is imperative. Participants need to know exactly what they are agreeing to do at the start of the longitudinal study. If you want people to participate in five surveys over five months, tell them this at the outset. Also, be clear about compensation. We recommend making the details of what participants will be expected to do and how they will be compensated as clear and transparent as possible before they officially enroll in the study.

3. Increase Payments or Award a Bonus

Participant recruitment is only one part of a successful study. When structuring the study, compensating participants well is crucial to retaining them across waves. There are multiple ways to incentivize participants across multiple waves of a longitudinal study. First, you could devise a payment schedule of increasing incentives to sustain participant motivation. If participants receive $1 for the first survey, they may receive $2 for the second survey and $3 for the third.

Another strategy is to keep absolute compensation the same, but shorten the length of each follow-up survey, thereby increasing the hourly rate.

A third strategy is to provide a large bonus for completing all waves of the study. Often, combining an increasing payment schedule with a bonus is the most effective strategy.

When running a longitudinal study, it is important to recognize the demand you are making on people’s time. People are more likely to enroll in the study and to stick with it when the compensation for the initial survey is competitive and the payment increases for each subsequent survey. Each of these payment strategies aims to increase retention.
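The three payment strategies in this section can be compared with a little arithmetic. The sketch below is illustrative only: the function names, the $5 completion bonus, and the survey lengths are assumptions made for the example, not figures from the study or the book. Only the $1, $2, $3 schedule comes from the text above.

```python
from decimal import Decimal


def payment_schedule(base, step, waves):
    """Per-wave payments that rise by `step` with each wave."""
    return [base + step * i for i in range(waves)]


def total_compensation(payments, completed_waves, completion_bonus):
    """Pay for each completed wave (1-based wave numbers).

    The bonus is added only when every wave was completed.
    """
    completed = set(completed_waves)
    earned = sum((payments[w - 1] for w in completed), Decimal("0"))
    if len(completed) == len(payments):
        earned += completion_bonus
    return earned


def hourly_rate(payment, minutes):
    """Effective hourly rate for one survey."""
    return payment * 60 / minutes


# Increasing schedule from the text: $1, then $2, then $3.
payments = payment_schedule(Decimal("1.00"), Decimal("1.00"), 3)

# Hypothetical $5 bonus for completing all three waves.
bonus = Decimal("5.00")
print(total_compensation(payments, [1, 2, 3], bonus))  # all waves plus bonus
print(total_compensation(payments, [1, 2], bonus))     # dropped out, no bonus

# Same $2 payment, shorter follow-up survey: the hourly rate rises.
print(hourly_rate(Decimal("2.00"), 20))  # assumed 20-minute survey
print(hourly_rate(Decimal("2.00"), 10))  # assumed 10-minute survey
```

Laying the numbers out this way makes it easy to show participants, before they enroll, exactly what each wave pays and what they forgo by skipping one.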

4. Give Participants Reminders and Make Accessing the Study Easy

People on MTurk have busy lives and can understandably forget to complete a follow-up survey. Therefore, we recommend (a) making follow-up surveys incredibly easy to access and (b) providing people with friendly reminders about the need to participate.

To maximize retention further, consider contacting participants before the launch of a follow-up survey, upon the launch of that follow-up survey, and once more on the final day of each survey’s availability to remind them of the survey’s impending expiration. Within the CloudResearch MTurk Toolkit, you can schedule these email notifications in advance and automate the process of sending them.
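If you plan reminders by hand, the three contact points described above can be derived from each wave's launch and closing dates. The sketch below only computes dates; it does not call the CloudResearch MTurk Toolkit or any other service. The function name, the one-day lead time for the pre-launch notice, and the example dates are all assumptions.

```python
from datetime import date, timedelta


def reminder_dates(launch, close, days_before_launch=1):
    """Return (label, date) pairs for the three reminders of one wave.

    `days_before_launch` is an assumed lead time; pick whatever suits the study.
    """
    if close < launch:
        raise ValueError("close date must not precede launch date")
    return [
        ("pre-launch notice", launch - timedelta(days=days_before_launch)),
        ("launch notice", launch),
        ("final-day reminder", close),
    ]


# Hypothetical wave that opens on a Monday and closes that Friday.
for label, day in reminder_dates(date(2021, 3, 8), date(2021, 3, 12)):
    print(f"{day.isoformat()}  {label}")
```

Generating the full list for every wave at the start of the study makes it easier to schedule all notifications in advance.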

In addition to the factors above, some strategies originally identified for maximizing retention in offline longitudinal research studies may be effective for online studies. For example, a project logo and project identity can help participants feel they are part of the project and increase retention (Ribisl et al., 1996). Regardless of the sampling method you use, thoughtful planning to minimize the difficulty of participating will increase the likelihood of study success.

What is a Good Retention Rate for a Longitudinal Research Study?

More than a year ago, we managed a large longitudinal research study for a client. The study required more than 2,500 people to participate in a two- to three-hour forecasting task each week for 16 weeks. Using all the sampling strategies described above, we had a total of 2,785 people complete at least one session. More importantly, 63% of people completed 15 of 16 weeks and 60% of people completed all 16 weeks of the study!

This level of retention would be nearly unimaginable for a face-to-face study given the demands of the study schedule. When studies are not this demanding, we regularly see retention rates near 70 to 80%.
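Retention figures like the ones above are straightforward to compute from per-participant completion counts. The sketch below is a minimal illustration with made-up data for ten participants; it is not the study's data or analysis code, and it assumes that "retained" means completing at least a given number of waves.

```python
def retention_rate(waves_completed, min_waves):
    """Share of enrolled participants who completed at least `min_waves` waves."""
    if not waves_completed:
        raise ValueError("no participants to evaluate")
    retained = sum(1 for n in waves_completed if n >= min_waves)
    return retained / len(waves_completed)


# Made-up counts of completed waves (out of 16) for ten participants.
sample = [16, 16, 15, 3, 16, 1, 16, 15, 16, 8]

print(f"{retention_rate(sample, 15):.0%} completed at least 15 waves")
print(f"{retention_rate(sample, 16):.0%} completed all 16 waves")
```

Reporting the rate at more than one threshold, as the study above does, shows whether attrition happens early or is spread across the waves.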

For more information on running longitudinal research studies online and sampling strategies, including case studies of successful projects, see Chapter 9 on longitudinal studies in the book Conducting Online Research on Amazon Mechanical Turk and Beyond.

References

Hall, M. P., Lewis, N. A., Jr., Chandler, J., & Litman, L. (2020). Conducting longitudinal research on Amazon Mechanical Turk. In L. Litman & J. Robinson (Eds.), Conducting online research on Amazon Mechanical Turk and beyond (pp. 234–263). Sage Academic Publishing.

Ribisl, K. M., Walton, M. A., Mowbray, C. T., Luke, D. A., Davidson, W. S., & Bootsmiller, B. J. (1996). Minimizing participant attrition in panel studies through the use of effective retention and tracking strategies: Review and recommendations. Evaluation and Program Planning, 19(1), 1–25. doi:10.1016/0149-7189(95)00037-2
