Examples of Good (and Bad) Attention Check Questions in Surveys

By Cheskie Rosenzweig, MS, Josef Edelman, MS & Aaron Moss, PhD

Why Are Attention Checks Used in Research?

Attention check questions are frequently used by researchers to measure data quality. The goal of such checks is to differentiate between people who provide high-quality responses and those who provide low-quality or unreliable data.

Although attention checks are an important way to measure data quality, they are only one of several data points researchers should use to isolate and quarantine bad data. Others include things like measuring inconsistency in responses, nonsense open-ended data, and extreme speeding.
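As a rough illustration of combining these signals, the sketch below flags a response only when several independent indicators point the same way. Every name and threshold here (the `Response` fields, the 40%-of-median speeding cutoff, the vowel-ratio heuristic for nonsense text, and the two-flag rule) is a hypothetical assumption chosen for the example, not a validated standard.

```python
from dataclasses import dataclass


@dataclass
class Response:
    """One survey response. All field names are hypothetical."""
    respondent_id: str
    failed_attention_checks: int
    seconds_to_complete: float
    inconsistent_pairs: int  # e.g. contradictory answers to paired items
    open_ended: str


def looks_like_nonsense(text: str) -> bool:
    """Crude heuristic (an assumption): very short or vowel-poor text."""
    if len(text.split()) < 2:
        return True
    letters = [c for c in text if c.isalpha()]
    if not letters:
        return True
    vowels = sum(c.lower() in "aeiou" for c in letters)
    return vowels / len(letters) < 0.2


def quality_flags(r: Response, median_seconds: float) -> list[str]:
    """Return the names of the data quality indicators this response trips."""
    flags = []
    if r.failed_attention_checks >= 1:
        flags.append("attention_check")
    # Assumed cutoff: faster than 40% of the median completion time.
    if r.seconds_to_complete < 0.4 * median_seconds:
        flags.append("speeding")
    if r.inconsistent_pairs >= 2:
        flags.append("inconsistency")
    if looks_like_nonsense(r.open_ended):
        flags.append("nonsense_open_ended")
    return flags


def should_quarantine(r: Response, median_seconds: float, min_flags: int = 2) -> bool:
    """Quarantine only when at least `min_flags` indicators agree."""
    return len(quality_flags(r, median_seconds)) >= min_flags
```

Under these assumed rules, a participant who misses a single attention check but otherwise responds carefully is flagged for review rather than quarantined outright.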

In this blog, we describe what makes a good attention check and provide examples of good and bad attention check questions.

What Makes a Good Attention Check?

A good attention check question has a few core components:

It measures attention, not other constructs such as memory, education, or culturally specific knowledge

It has a clear, correct answer

It is not overly stringent or too easy

It doesn’t require participants to violate norms of human behavior

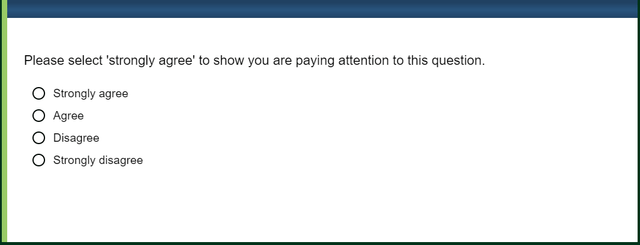

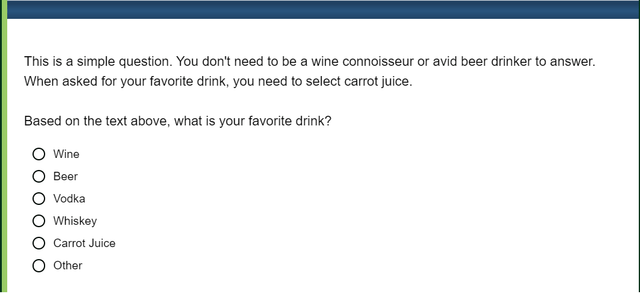

The example below contains all of these elements.

Common Mistakes Researchers Make When Writing Attention Checks

Attention check questions can be subpar for many reasons. Most often, when a question does not function as it should, it is because one of the core components above has been violated.

Attention Checks That Measure Something Other Than Attention

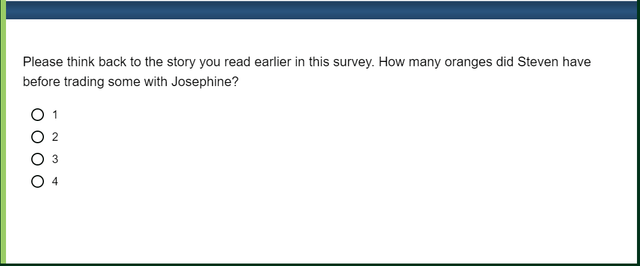

Researchers sometimes adopt attention check questions that ask participants to think back to an earlier part of the survey and recall specific information. This kind of question certainly has something to do with attention, but it also measures memory. And often the difficulty of the recall required is unclear, as in the example below.

Even though this question has a clear and factual answer, it is unclear what to conclude about someone who gets the question wrong. Did the person fail because they were not paying attention or because they forgot the story’s details? Was the number of oranges a critical piece of the story or a minor detail that people may have understandably failed to encode?

Rather than asking attention check questions that rely on memory, we recommend asking questions that check for comprehension just after critical materials are presented within the study. Then, questions that assess attention can be used solely to assess attention, regardless of where in the study they are presented.

Attention Checks Can Be Unclear

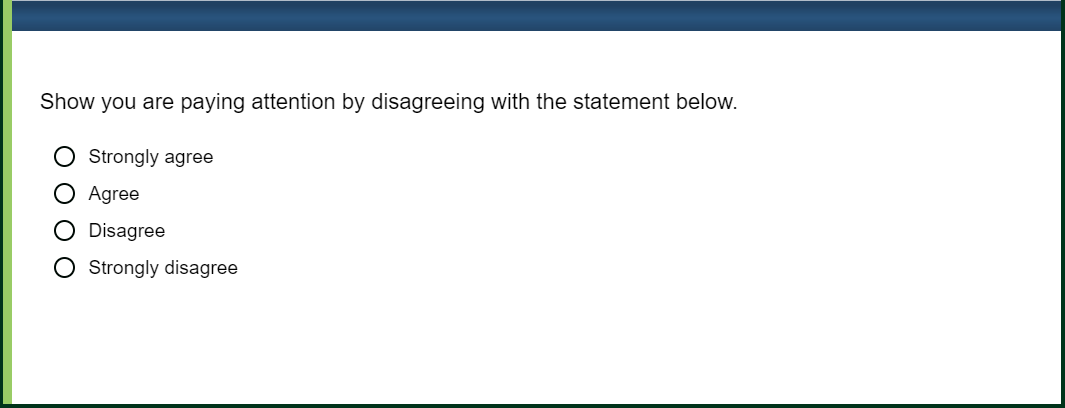

Another reason attention check questions sometimes fail is that they lack a clear and correct answer. At times, this is because the question’s answer options are ambiguous.

Even though this question appears straightforward and is similar to the example of a good attention check item given above, it actually contains some ambiguity. Some participants may decide to indicate their attention by selecting strongly disagree rather than just disagree. Are these people being inattentive?

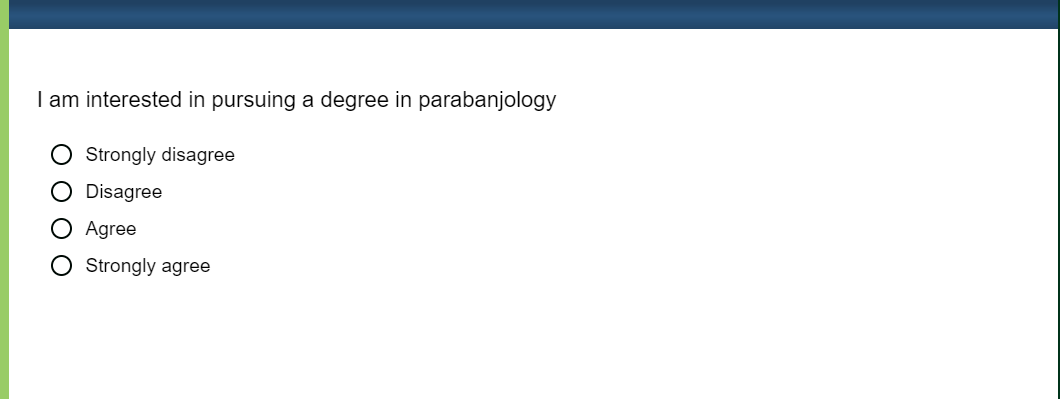

At other times, questions can be unclear because participants interpret things differently than researchers intended. Here is an example:

And another:

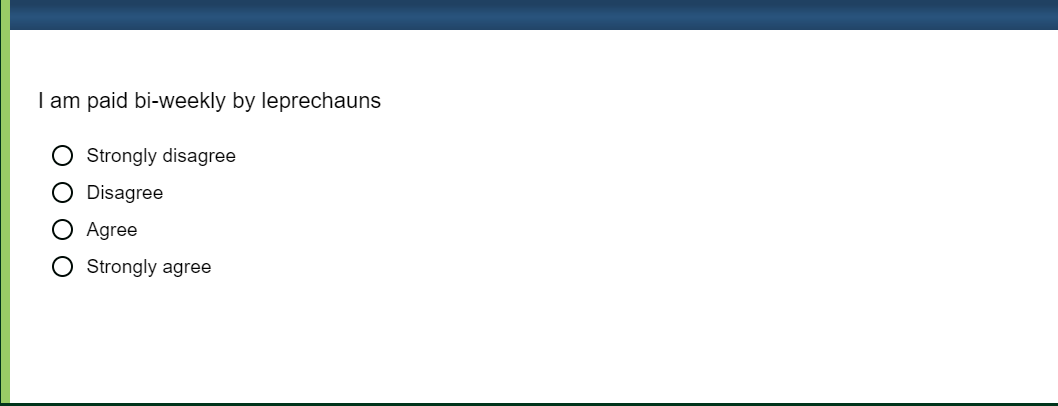

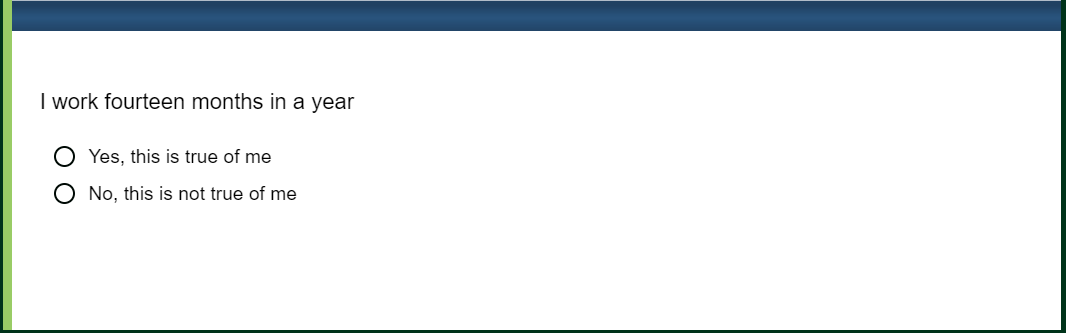

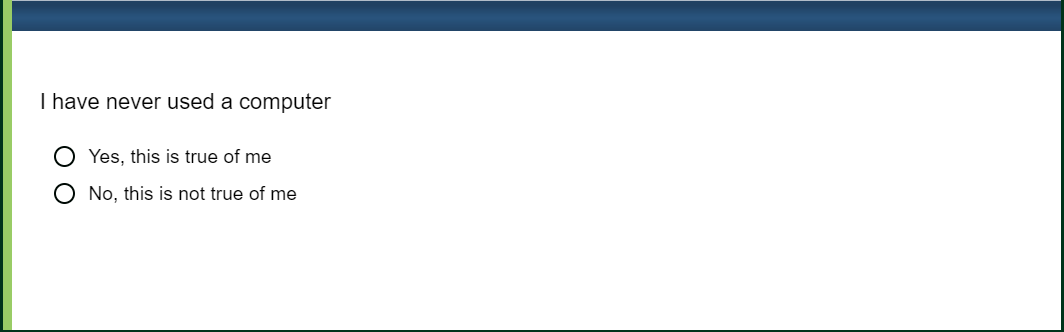

In both questions above, it appears there is little room for ambiguity or error. Nevertheless, research shows that attentive participants can sometimes ‘fail’ these items because they interpret them differently than researchers intend (Curran & Hauser, 2019). For instance, participants asked to explain why they agreed with the first statement said things like: even though they were not sure what parabanjology is, it sounds interesting, and they did not want to rule it out. In response to the second statement, participants may agree that they are paid bi-weekly, just not by leprechauns.

Instead of the items above, we recommend asking participants to “strongly agree” or “strongly disagree” with items, or asking questions that are short, simple, and hard to reason around.
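The ambiguity becomes concrete at scoring time. The sketch below assumes a hypothetical five-point agreement scale and a hypothetical `passes_check` helper; it shows how an instruction that names a non-endpoint option forces the researcher to choose between strict and lenient scoring.

```python
# Hypothetical five-point agreement scale, ordered from one endpoint to the other.
SCALE = [
    "strongly disagree",
    "disagree",
    "neither agree nor disagree",
    "agree",
    "strongly agree",
]


def passes_check(response: str, instructed: str, strict: bool = True) -> bool:
    """Score one instructed-response item.

    strict=True  -> only the exact instructed option passes.
    strict=False -> any option on the same side of the midpoint passes.
    """
    response = response.strip().lower()
    instructed = instructed.strip().lower()
    if response not in SCALE or instructed not in SCALE:
        raise ValueError("response and instructed must be options on SCALE")
    if strict:
        return response == instructed
    midpoint = len(SCALE) // 2
    r, i = SCALE.index(response), SCALE.index(instructed)
    return (r < midpoint and i < midpoint) or (r > midpoint and i > midpoint)


# A participant told to select "disagree" who emphasizes with "strongly disagree":
print(passes_check("strongly disagree", "disagree"))                # strict scoring
print(passes_check("strongly disagree", "disagree", strict=False))  # lenient scoring
```

When the instructed option is an endpoint such as "strongly disagree", there is no stronger option for an attentive participant to drift toward, so strict scoring is less likely to penalize them.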

Attention Checks Vary in Difficulty and Can Require Participants to Violate Behavioral Norms

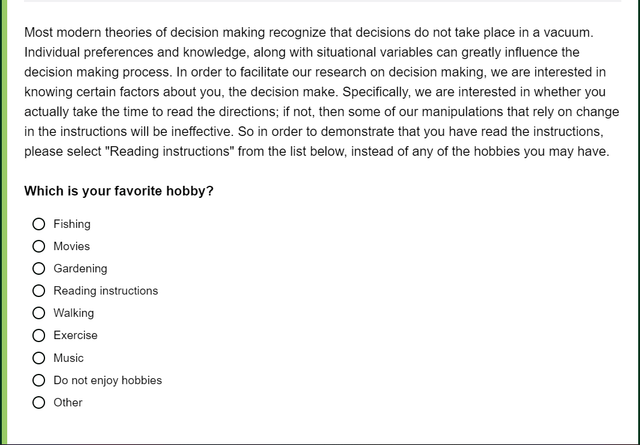

Sometimes an attention check is overly difficult not because of the content it asks participants to provide or recall, but because it can only be answered correctly by participants who attend to every minute detail and read every single word in a survey. Questions like these end up excluding participants who are otherwise high-quality respondents but are not extraordinarily attentive and conscientious:

Attention checks shouldn’t require participants to ignore direct questions that appear straightforward. Instead, questions like this should signal that the instructions are important and make the expected response clear, like the example below.

The above examples are reasons why we recommend that researchers use attention check questions that have been previously tested and validated as good checks of attention. When researchers create attention checks based on personal intuition, the checks they use often lack validity (see Berinsky, Margolis, & Sances, 2014).

Automate Attention Checks and Ensure Better Data With Sentry® By CloudResearch

Rooted in these best practices in developing attention checks and other measurements of data quality, CloudResearch has undertaken several large-scale initiatives aimed at improving data quality in online surveys. We have cleaned up data on one platform often used for online research: Mechanical Turk. We also have a data quality solution that can be applied to any sample source: our Sentry system. Sentry is an all-encompassing data quality solution that ensures top-notch respondents through multiple behavioral and technological analyses, including attention checks. Learn more about Sentry today!
