Connect by CloudResearch: Advancing Online Participant Recruitment in the Digital Age

We launched Connect in the summer of 2022, and in the past year it has made big waves in the world of online research. More than 250 papers have already used Connect, including publications, preprints, theses, and dissertations, and we expect even more growth in the coming year.

Plenty of papers already show that Connect makes high-quality data easy and affordable to collect, so we wrote a white paper to explain the mechanics and principles behind that success. The paper covers the design decisions, innovations, and commitments that have shaped Connect, and explains how and why the platform has quickly become a cornerstone of the online research community. We hope it builds a deeper understanding of the platform’s capabilities and of the dedication and vision that propel it forward. Whether you’re a seasoned researcher or just starting out, the paper offers a useful look at modern online participant recruitment.

Below, we highlight some of the main points in the paper. You can read the full paper here, and cite it as:

Hartman, R., Moss, A. J., Jaffe, S. N., Rosenzweig, C., Litman, L., & Robinson, J. (2023, September 15). Introducing Connect by CloudResearch: Advancing Online Participant Recruitment in the Digital Age. Retrieved from psyarxiv.com/ksgyr

Connect Features for Facilitating Responsible Research

Connect provides a comprehensive suite of features tailored for advanced research needs. It facilitates collaboration and fund-sharing between labs, enables study quota settings, allows for instant text notifications, and offers researcher ratings and an integrated communication system. Additionally, Connect is adept at managing complex study designs through survey integration, longitudinal study support, bonusing options, and a versatile API. These capabilities streamline the execution of intricate research protocols.

The Participant Experience

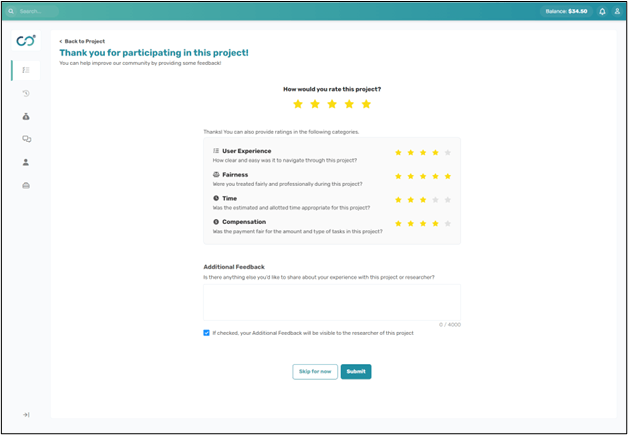

Connect is designed with a primary focus on research participants, recognizing them as integral partners in the research process. We’ve learned from other platforms’ limitations and introduced several features to enhance the participant experience. These include a Connect subreddit for community interaction, a feedback system allowing participants to rate researchers, an integrated messaging system safeguarding participant anonymity, real-time study feedback options, easy reporting mechanisms for unfair researcher practices, and established compensation benchmarks for projects.

The expanded rating system allows participants to rate the user experience, fairness of the project, accuracy of the time estimate, and quality of the compensation. All ratings contribute to a researcher’s reputation.

Collaborative Science

Modern science is a collective endeavor. Instead of solitary scientists, research now involves diverse teams from undergraduates to experts. These teams not only collaborate within their labs but also connect globally, turning science into a joint global initiative. Yet, collaboration often hits snags. Existing platforms struggle to support cohesive research teamwork, leading some to use joint accounts, risking security and muddling accountability, while others grapple with convoluted logistics for shared projects and financing.

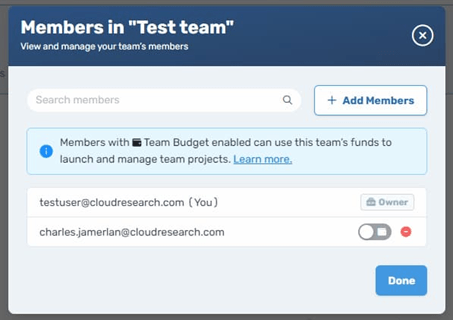

Enter Connect Teams. Connect Teams lets researchers collaboratively manage projects from individual accounts. Its user-friendly interface effortlessly switches to Teams mode, where you can create, oversee, and engage with your team and projects. Plus, pooling resources and sharing funds becomes straightforward without risking personal credentials. Lab managers can efficiently fund their teams, ensuring security and accountability throughout.

Connect Teams allows you to share projects with others, and use a shared wallet.

Demographic Targeting

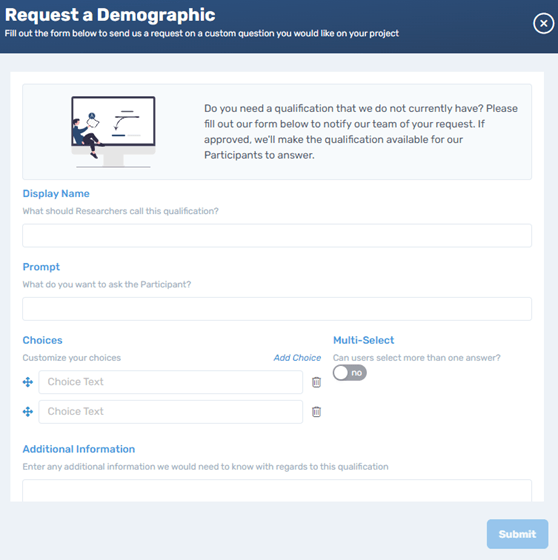

Connect boasts over 120 qualifying questions for participant targeting, encompassing basic demographics like age, race, and income, as well as niche criteria like podcast listenership, programming proficiency, gun ownership, and cryptocurrency investments. And if you’re seeking a demographic we haven’t yet covered? Simply log in, create a project, choose “Demographic Targeting,” and on the subsequent page, click “Request a Demographic.” After you fill out the prompts, our team will review your request and add the demographic, making it available for targeting within days.

Connect allows you to request any demographic or targeting criteria you want by simply filling out a short form.

Census Matching

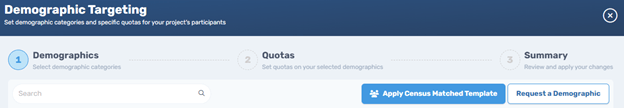

Ensuring a study’s participants represent a broad demographic spectrum enhances its reliability and replicability. To facilitate this, Connect offers an effortless, free census-matching feature. With just a click, researchers can bolster the authenticity and applicability of their studies.

Census matching with a click of a button on Connect

Setting Quotas

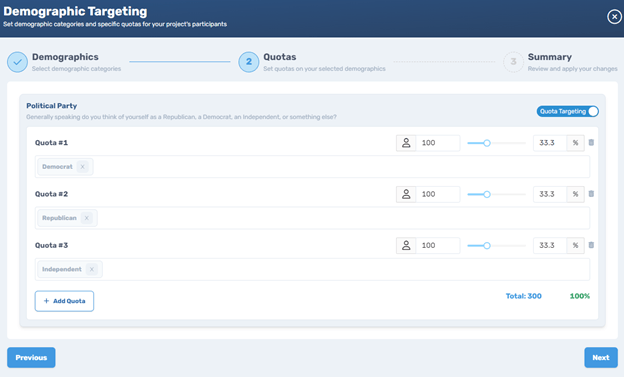

Acquiring a diverse sample can be challenging. For instance, if you aim to survey Democrats, Republicans, and Independents, other platforms might require three distinct studies, which is both tedious and prone to errors. Connect simplifies this with its intuitive quota system. Simply pick the desired demographics (like political affiliation) and proceed to activate the “Quota Targeting” feature. You can then specify which categories you want and determine the percentage of participants for each. Connect smartly prioritizes harder-to-reach demographics for more efficient data collection.

Setting quotas on Connect is quick and easy, with no need to create separate surveys for different groups.
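
To make the percentage-based quotas described above concrete, here is a minimal Python sketch that turns percentage shares into whole-number participant targets. It is only an illustration under our own assumptions: the function name `quota_targets` and the largest-remainder rounding rule are hypothetical and are not taken from Connect’s implementation or API.

```python
from math import floor


def quota_targets(total, shares):
    """Convert percentage shares into whole-number participant targets.

    Hypothetical helper, not part of Connect. Uses largest-remainder
    rounding so the targets always add up to `total`.
    """
    if abs(sum(shares.values()) - 100) > 1e-9:
        raise ValueError("shares must sum to 100 percent")

    # Exact (possibly fractional) number of participants per group.
    exact = {group: total * pct / 100 for group, pct in shares.items()}
    # Start from the rounded-down counts.
    targets = {group: floor(value) for group, value in exact.items()}
    leftover = total - sum(targets.values())

    # Give the remaining seats to the groups with the largest fractional parts.
    by_remainder = sorted(exact, key=lambda g: exact[g] - targets[g], reverse=True)
    for group in by_remainder[:leftover]:
        targets[group] += 1
    return targets


targets = quota_targets(200, {"Democrat": 40, "Republican": 40, "Independent": 20})
print(targets)
```

With 200 participants and a 40/40/20 split, this gives 80 Democrats, 80 Republicans, and 40 Independents. The rounding step only matters when the percentages do not divide the sample size evenly.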

Validated Scales

Researchers frequently need to segment participants not just by demographics but also by psychological profiles, often called psychographic characteristics. These traits often can’t be gauged with a single question and require validated multi-item tools for precise measurement.

Connect offers a pioneering system to recruit participants using these validated scales. This includes both well-established and custom scales that researchers can introduce. We periodically gather scale data from participants, ensuring this information is kept distinct from individual studies.

For instance, researchers can pinpoint participants using specific instruments like the PHQ-9 (for depression), the EDE-QS (for eating disorders), or the GAD-7 (for generalized anxiety). They can also specify desired scores on these scales, such as seeking participants with varying levels of anxiety.

The possibilities are expansive: personality psychologists can segment by the Big Five Inventory, health experts can evaluate physical activity or eating habits with tools like the IPAQ or FFQ, educators can delve into student motivations using Learning Strategies Scales, and mental health professionals can utilize the GAD-7 or the PSS to understand anxiety or stress levels. While many of these scales are already available on Connect, we’re consistently expanding based on user needs.

Researchers can target any point along the scale and set quotas for each targeted group.
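
As a rough illustration of score-based targeting, the Python sketch below scores the GAD-7 and checks whether a participant falls inside a target range. The scoring itself follows the published instrument (seven items rated 0 to 3, totals from 0 to 21, with conventional bands at 5, 10, and 15). The function names and the `in_target_range` check are our own hypothetical helpers, not Connect’s API.

```python
# Conventional GAD-7 severity bands: (lowest total, highest total, label).
GAD7_BANDS = [
    (0, 4, "minimal"),
    (5, 9, "mild"),
    (10, 14, "moderate"),
    (15, 21, "severe"),
]


def gad7_total(item_responses):
    """Sum the seven GAD-7 items, each rated 0 to 3."""
    if len(item_responses) != 7:
        raise ValueError("the GAD-7 has exactly seven items")
    if any(r not in (0, 1, 2, 3) for r in item_responses):
        raise ValueError("each item must be an integer from 0 to 3")
    return sum(item_responses)


def gad7_band(total):
    """Map a GAD-7 total score to its conventional severity label."""
    for low, high, label in GAD7_BANDS:
        if low <= total <= high:
            return label
    raise ValueError("GAD-7 totals range from 0 to 21")


def in_target_range(total, minimum, maximum):
    """Hypothetical eligibility check for a researcher-chosen score range."""
    return minimum <= total <= maximum


score = gad7_total([2, 1, 2, 1, 2, 1, 2])
print(score, gad7_band(score), in_target_range(score, 10, 21))
```

A researcher recruiting participants with moderate or severe anxiety could target totals from 10 to 21 and then set a quota for each band.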

Complex Studies

Modern research frequently demands innovative study designs that go beyond the standard cross-sectional survey. To run these studies, researchers often need greater access to participants, along with real-time notifications, communication, and data collection. We designed Connect with these needs in mind. Its features for complex studies include integration with any survey platform for seamless data collection, text notifications to support real-time data collection, support for longitudinal studies and experience sampling (including participant groups and preprogrammed launch times), bonusing options, and an API for more flexibility.

Data Quality and Fraud Prevention

Online research has opened many doors, but it’s also brought some challenges, especially when it comes to data reliability. Recent studies have found that between 30% and 40% of data from online platforms might not be trustworthy (Berry et al., 2022; Chandler et al., 2019; Litman et al., 2023). This is a problem for both corporate research, which loses billions of dollars on misinformed marketing campaigns, and for research in the social and behavioral sciences. Good research relies on accurate data, and inaccurate information from disengaged, inattentive, and fraudulent respondents creates illusory effects and slows down progress (Dixit & Bai, 2023; Litman, 2023). To protect data quality, Connect thoroughly and continuously vets participants.

Participant Screening

On Connect, data quality is safeguarded through rigorous technical and behavioral checks right from the participant sign-up phase. On the technical side, we check IP addresses, bank or PayPal details, and device usage. This means no participant can have multiple accounts, and no device is used for multiple accounts on the same project. On the behavioral side, participants undergo an assessment via our Sentry system which evaluates their attention, honesty, language comprehension, and adherence to instructions. The system actively detects behaviors such as unauthorized translation, copied answers, and automation. Furthermore, open-ended responses are meticulously assessed using both human judgment and automation. By combining these methods, we block thousands of fraudulent or low-quality participants from joining Connect each month, and ensure that only eligible participants are able to complete studies.
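
The device rule above (no device used for multiple accounts on the same project) can be pictured as a simple grouping check. The Python sketch below is a hypothetical illustration only: the record layout and the function name `shared_devices` are our assumptions, and it does not describe how Connect or Sentry actually implement their checks.

```python
from collections import defaultdict


def shared_devices(submissions):
    """Find devices used by more than one account within the same project.

    `submissions` is an iterable of (project_id, device_id, account_id)
    tuples. This layout is assumed for illustration only.
    """
    accounts_by_device = defaultdict(set)
    for project_id, device_id, account_id in submissions:
        accounts_by_device[(project_id, device_id)].add(account_id)

    # Keep only the (project, device) pairs tied to two or more accounts.
    return {
        key: accounts
        for key, accounts in accounts_by_device.items()
        if len(accounts) > 1
    }


flagged = shared_devices([
    ("study-1", "device-A", "acct-1"),
    ("study-1", "device-A", "acct-2"),  # same device, second account
    ("study-1", "device-B", "acct-3"),
    ("study-2", "device-A", "acct-1"),  # different project, so not flagged
])
print(flagged)
```

Here only `device-A` on `study-1` is flagged, because two different accounts used it on the same project.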

Ongoing Monitoring

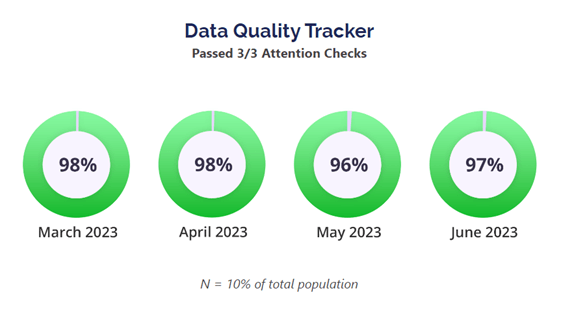

After participants join Connect, we remain dedicated to maintaining data quality. We conduct regular surveys to examine participant data quality and monitor the platform’s overall quality each month. If we spot any questionable data, we delve deeper to decide if the participant should remain on Connect. Additionally, we watch for unusual financial activities, returning blocked accounts, and other potential red flags.

Every month, we sample around 10% of Connect participants, running surveys with attention check questions. Since we began these surveys in November 2022, an average of 98.1% of participants have passed all attention checks.

Researchers also play a pivotal role in maintaining data quality. They have tools to reject participants delivering low-quality data, helping both in cost-saving and platform integrity. Participants with recurring rejections undergo further examination. Researchers can also “flag” participants, indicating potential quality concerns without withholding their pay. Continued flags or declining data quality may lead to participant removal. Moreover, researchers can exclude participants from all future studies using the Universal Exclude list. If a participant is frequently excluded by different researchers, we consider their removal from the platform.

Conclusion

The evolution of behavioral science research highlights the increasing demand for efficient and cost-effective online participant recruitment platforms. CloudResearch’s Connect platform is primed to meet this demand, offering an array of features tailored to researchers’ diverse needs. Connect emphasizes both unparalleled data quality through meticulous screening and monitoring and the ethical treatment of participants. The platform enhances participant engagement by fostering a community, ensuring fair pay, and facilitating open communication. This dual focus on quality and ethics, alongside an array of advanced tools, makes Connect both user-friendly and efficient. Notably, despite its premium features, Connect maintains competitive pricing, making it an attractive choice for researchers prioritizing cost and quality.

Looking ahead, Connect has ambitious plans, including expansion to more English-speaking countries by 2023. The platform will be introducing improved messaging, streamlined management, and new tools for researchers, while participants can expect enhanced notifications, insights, and expanded access. Upcoming loyalty and referral programs are also on the horizon. These future initiatives underscore Connect’s ongoing commitment to innovation, user-focused design, and leadership in the research platform domain.

References

Berry, C., Kees, J., & Burton, S. (2022). Drivers of data quality in advertising research: Differences across MTurk and professional panel samples. Journal of Advertising, 51(4), 515-529. https://doi.org/10.1080/00913367.2022.2079026

Chandler, J., Rosenzweig, C., Moss, A. J., Robinson, J., & Litman, L. (2019). Online panels in social science research: Expanding sampling methods beyond Mechanical Turk. Behavior Research Methods, 51, 2022-2038. https://doi.org/10.3758/s13428-019-01273-7

Coppock, A., Leeper, T. J., & Mullinix, K. J. (2018). Generalizability of heterogeneous treatment effect estimates across samples. Proceedings of the National Academy of Sciences, 115(49), 12441-12446.

Dixit, N., & Bai, A. (2023, May 1). Businesses and investors are losing billions to fraudulent market research data. Here’s how to fix it. Nasdaq. https://www.nasdaq.com/articles/businesses-and-investors-are-losing-billions-to-fraudulent-market-research-data.-heres-how?amp

Lease, M., Hullman, J., Bigham, J., Bernstein, M., Kim, J., Lasecki, W., Bakhshi, S., Mitra, T., & Miller, R. (2013, March 6). Mechanical Turk is not anonymous. SSRN. https://ssrn.com/abstract=2228728 or https://dx.doi.org/10.2139/ssrn.2228728

Litman, L. (2023, July 14). Are businesses losing billions to market research fraud? https://www.cloudresearch.com/resources/blog/are-businesses-losing-billions-to-market-research-fraud/

Litman, L., Rosen, Z., Hartman, R., Rosenzweig, C., Weinberger-Litman, S. L., Moss, A. J., & Robinson, J. (2023). Did people really drink bleach to prevent COVID-19? A guide for protecting survey data against problematic respondents. PLOS One. https://doi.org/10.1371/journal.pone.0287837

Moss, A. J., Rosenzweig, C., Robinson, J., et al. (2023). Is it ethical to use Mechanical Turk for behavioral research? Relevant data from a representative survey of MTurk participants and wages. Behavior Research Methods. https://doi.org/10.3758/s13428-022-02005-0

Mullinix, K., Leeper, T., Druckman, J., & Freese, J. (2015). The generalizability of survey experiments. Journal of Experimental Political Science, 2(2), 109-138. https://doi.org/10.1017/XPS.2015.19
