Age ISN'T Just a Number: Age Verification in Online Studies

Do you own a bathrobe? Do you save the packaging when you buy a new phone? Would drawing something on a dirty car window be just plain unthinkable? These are some of the questions in the popular party game “How Old Are You Really?” The underlying premise of the game is simple: certain cultural practices and pieces of knowledge are more common in some generations than in others. The game is lots of fun, but could the same idea be used for science?

Using Cultural Knowledge to Verify Participant Age

Oftentimes, researchers want to target their surveys toward particular demographics: e.g., people of a certain race, gender, or age. If they state the criteria they’re looking for in the survey title, they face the risk of people lying about their demographics in order to qualify for the study, particularly if the study offers high compensation. While most people tend to be honest, if the incentive and opportunity are there, they will sometimes succumb to temptation. That’s where CloudResearch qualifications fit in, or the alternative 2-step screener. But these methods aren’t foolproof, and researchers might want the peace of mind of knowing the participants they’ve recruited aren’t imposters.

That’s where cultural knowledge fits in. Instead of relying on self-reporting, researchers can test their participants to see if their knowledge and experiences align with those of the demographic they’re claiming to be a part of. To demonstrate the utility of this approach, we ran a series of studies (preprint available here) focusing on age.

Age Verification Methods and Results

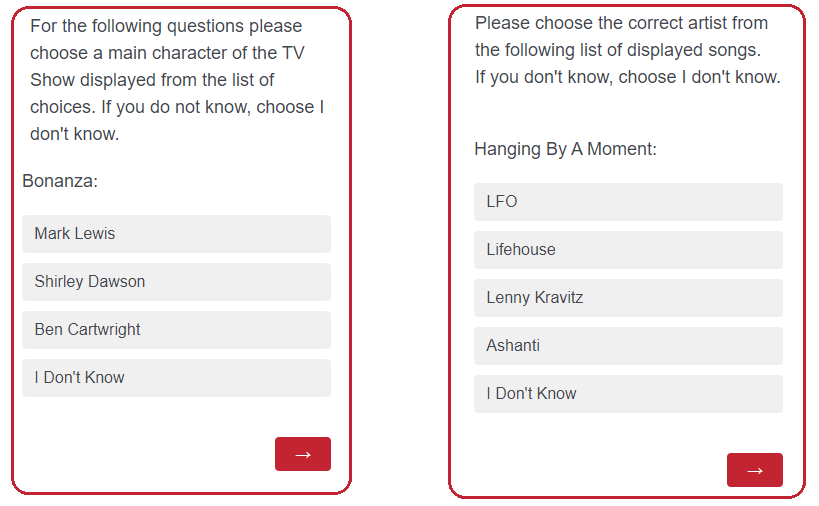

Specifically, after trying out different categories, we came up with 19 questions about songs and TV shows to test participants’ “cultural age.” Questions about older culture were about songs and TV shows that had been popular in the 60s-80s, while questions about younger culture focused on more recent decades.

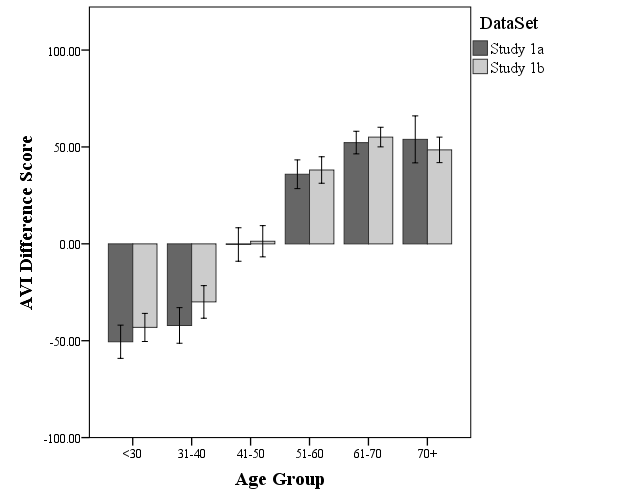

Once we had our instrument, it was time to take it for a test drive. We gathered two samples, one from MTurk and one from Prime Panels, and in each sample we got data from about 50 participants in each decade of age (20s through 70s). Since we only cared about people’s relative knowledge of popular culture and not how much they know overall, we calculated a difference score for each participant: the percentage of older-culture questions answered correctly minus the percentage of younger-culture questions answered correctly. Higher scores meant participants had relatively more “older” knowledge, while lower scores meant they knew relatively more about “younger” culture. We then compared these scores against participants’ self-reported age to see how well the instrument performed.
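
To make the scoring concrete, here is a minimal Python sketch of that difference score. The function name and the 10/9 split of the 19 questions are made up for illustration; they are not details reported from the studies.

```python
def difference_score(older_correct, older_total, younger_correct, younger_total):
    """Percent correct on older-culture items minus percent correct on younger-culture items.

    Positive values mean relatively more "older" knowledge,
    negative values mean relatively more "younger" knowledge.
    """
    older_pct = 100.0 * older_correct / older_total
    younger_pct = 100.0 * younger_correct / younger_total
    return older_pct - younger_pct


# Hypothetical participant: 8 of 10 older items right, 3 of 9 younger items right.
# The 10/9 split is an assumption made only for this example.
score = difference_score(8, 10, 3, 9)
print(round(score, 1))
```

Because the score is a difference of percentages, a participant who knows a lot about both eras and one who knows little about both can land at the same value near zero, which is the point: only relative knowledge matters.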

The results are striking. As is evident in the image below, participants over 50 clearly know much more about older culture, while those 40 and under clearly know more about younger culture. And the people who knew a little bit about both fell smack dab in the middle!

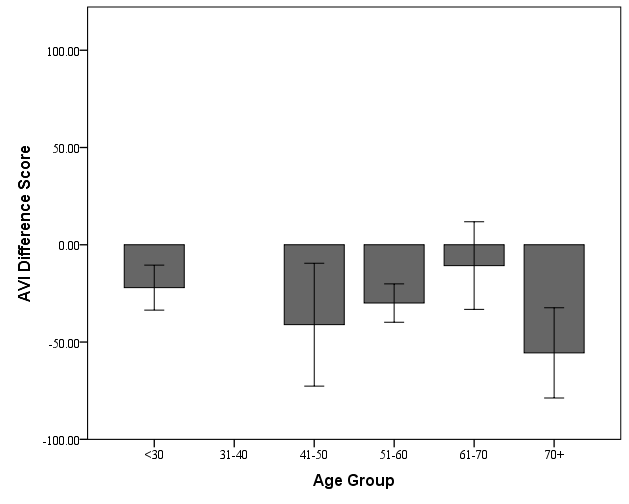

That’s all very well and good, but what about those imposters? When you take a car out for a test drive, you don’t just want to get that smooth sailing experience. You need to know how the car will handle sudden braking, sharp turns, and bumpy roads. To test our new measure, we tried to set it up for failure. In the first study, we were pretty confident participants were telling the truth about their age—the study wasn’t advertised for any particular age group, so they had no incentive to lie. In Study 2, we actively recruited liars. We advertised the study as being “ONLY for workers who are OVER 50,” but behind the scenes we set the qualification in a way that made the study visible only to workers who had previously told us they were 30 or younger.

Our participants were terrible liars! Most reported their age as over 50, but they knew nothing about older culture. Across the board, our participants looked like the youngsters they actually were, as opposed to the old timers they were impersonating.

This gave us assurance that our test could be a useful tool for catching imposters. Even if a participant claims to be 65 in order to qualify for a study, one could easily ask them some questions about popular songs and TV shows, and based on their answers have a pretty good idea about whether they’re telling the truth.
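
As a rough illustration of how such a check might be wired into a screener, the Python sketch below flags a participant who claims to be over 50 but whose difference score (percent correct on older-culture questions minus percent correct on younger-culture questions) leans "younger." The function name and the cutoff of 0 are hypothetical placeholders, not values from the studies; a real cutoff would need to be chosen from data.

```python
def flags_older_claim(claimed_age, diff_score, min_age=50, score_cutoff=0.0):
    """Return True when a claimed age over `min_age` is not backed up by the score.

    `diff_score` is older-culture percent correct minus younger-culture percent correct.
    `score_cutoff` is a hypothetical threshold chosen only for illustration.
    """
    claims_older = claimed_age > min_age
    looks_younger = diff_score < score_cutoff
    return claims_older and looks_younger


# Hypothetical cases, not study data.
print(flags_older_claim(65, -40.0))  # claims 65, knowledge leans younger
print(flags_older_claim(65, 35.0))   # claims 65, knowledge leans older
print(flags_older_claim(28, -40.0))  # makes no over-50 claim, so nothing to flag
```

A flag like this is best treated as a prompt for a closer look rather than proof of lying, since some genuine participants will have atypical cultural knowledge.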

But does it work for everyone? I’ll never forget the first car I bought. I was living in Wisconsin at the time, and it was a bright sunny day in late spring. I did all the stress-testing I could, but nothing I did clued me in to the fact that RWD vehicles and snowy winters are not a good match. Come mid-December, I learned my lesson.

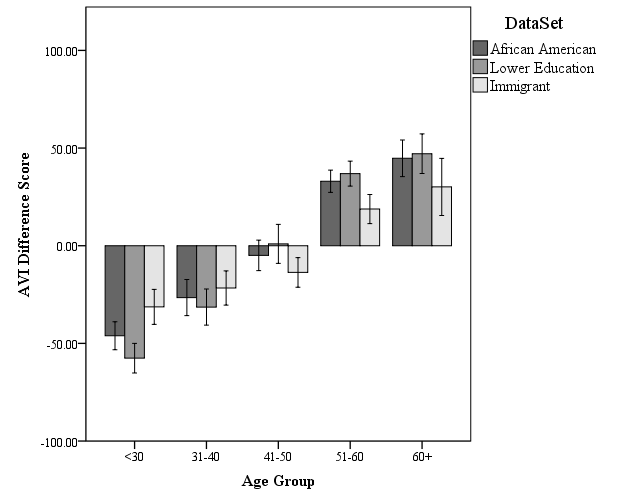

Rather than risking getting our proverbial wheels spinning helplessly in a ditch, we decided to take the age verification instrument out for a test in different conditions. Our “bad weather” test was to see how well it performed in different cultural groups, specifically African Americans, immigrants to the U.S., and people whose highest level of education completed was high school. We figured there was a chance it wouldn’t work as well with these groups, since they might have different cultural knowledge. What we found is that while the differences between younger and older participants weren’t quite as large as in Study 1, the instrument still did a fantastic job distinguishing between people of different ages.

With this final test, we were able to conclude that our instrument can reliably tell apart younger and older participants, even when dealing with imposters, and even among people of different cultural backgrounds.

Conclusion

We like the questions we came up with, but we’re even more excited about the general approach. When people try to recruit groups who have specialized knowledge, they need not rely solely on participant self-report. Instead, a better approach would be to ask some questions to determine whether the participants have the knowledge they should have if they truly belong to that group. With so many moving parts in online research, why take the chance that people in your sample may be imposters?
