Determining Completion Rate and Dropout Rate on Mechanical Turk

What are completion rate and dropout rate?

Dropout rate is the percentage of participants who start a study but do not complete it. It is sometimes called attrition rate and is the complement of completion rate (dropout rate = 100% − completion rate). On MTurk, completion rate is the number of Workers who submit a HIT divided by the number of Workers who accept it, expressed as a percentage. Note that under this definition, Workers whose submissions are rejected are still counted as completes.
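To make the arithmetic concrete, here is a minimal Python sketch that computes both rates from a list of per-assignment outcomes. The status labels ("Submitted", "Approved", "Rejected", "Returned", "Abandoned") and the function names are illustrative assumptions, not TurkPrime's implementation. The sketch also assumes the HIT has closed, so every accepted assignment has either been submitted or dropped. Following the definition above, rejected submissions count as completes.

```python
from collections import Counter

# Hypothetical status labels that count as "submitted".
# Rejected Workers still count as completes under this definition.
SUBMITTED = {"Submitted", "Approved", "Rejected"}


def completion_rate(statuses):
    """Percentage of Workers who accepted the HIT and went on to submit it.

    Assumes every entry is one accepted assignment on a closed HIT;
    any status outside SUBMITTED (e.g. "Returned", "Abandoned") is a dropout.
    """
    counts = Counter(statuses)
    accepted = sum(counts.values())
    if accepted == 0:
        raise ValueError("no Workers have accepted this HIT")
    submitted = sum(counts[s] for s in SUBMITTED)
    return 100.0 * submitted / accepted


def dropout_rate(statuses):
    """Dropout rate is the complement of completion rate."""
    return 100.0 - completion_rate(statuses)


statuses = ["Approved"] * 80 + ["Rejected"] * 5 + ["Returned"] * 10 + ["Abandoned"] * 5
print(completion_rate(statuses))  # 85.0
print(dropout_rate(statuses))     # 15.0
```

In this made-up example, 85 of 100 accepting Workers submitted (including 5 who were rejected), giving an 85% completion rate and a 15% dropout rate.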

Why is completion rate important?

Completion rate is an important indicator of data quality. A low completion rate may indicate selection bias, which can undermine the representativeness of the results. A very high dropout rate may also signal that something is wrong with the study itself. It is good practice to report completion rate in the Method or Results section of a paper; indeed, some editors require authors to follow the Checklist for Reporting Results of Internet E-Surveys (CHERRIES; Eysenbach, 2004), which asks about a study’s completion rate.

How to determine the completion rate of a HIT

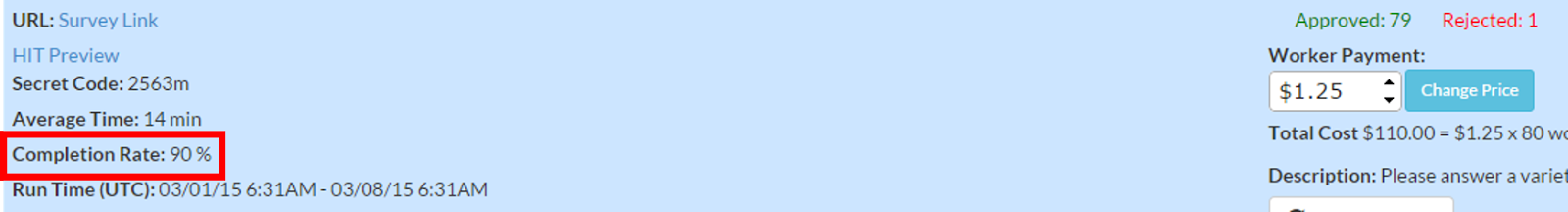

Mechanical Turk does not report a HIT’s completion rate, and researchers who use MTurk participants rarely report it themselves. In a review of 300 studies published in 2014, we found that fewer than 5% of papers using MTurk participants reported completion rates (paper in preparation). Because completion rate can be an important indicator of a study’s quality, we made it available on TurkPrime so that researchers can monitor it in real time. The completion rate is shown in the expanded view of a HIT on the TurkPrime Dashboard.

Why do Workers sometimes fail to complete HITs?

Workers who accept a HIT may be unable or unwilling to submit it for a number of reasons: 1) they may not have had time to complete the HIT; 2) technical problems may have prevented them from submitting it, for example, the survey page did not load or their computer malfunctioned; or 3) they may have returned the HIT because they were dissatisfied with the study or lost interest.

Why monitoring completion rate may be important

There are a number of reasons a researcher may want to monitor completion rate in real time. Something about a HIT may lead Workers to return it, or leave them unable or unwilling to complete it. For instance, Workers may decide they do not want to work on a HIT only after accepting it and reading the study’s detailed instructions. If the instructions are systematically turning Workers away, the researcher needs to know so that they can either change the study or, if not, acknowledge this methodological limitation. Workers are also more likely to return a HIT that takes much longer to complete than its description indicates. A requester may want to raise the pay rate if the completion rate is low.
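One subtlety when monitoring a running HIT is that Workers who have accepted it but not yet finished should not be counted as dropouts. The Python sketch below is one way to handle this, under stated assumptions: it computes completion rate only among Workers whose outcome is already known and flags the HIT for review when that rate drops below a threshold. The `HitSnapshot` fields, the 80% threshold, and the 20-Worker minimum are all hypothetical choices for illustration, not values recommended by TurkPrime or MTurk.

```python
from dataclasses import dataclass


@dataclass
class HitSnapshot:
    """Hypothetical counts for one HIT at a single point in time."""
    accepted: int     # Workers who have accepted the HIT so far
    submitted: int    # submissions so far, including any later rejected
    in_progress: int  # accepted but not yet submitted or returned


def live_completion_rate(snap):
    """Completion rate among Workers whose outcome is already known.

    In-progress Workers are excluded so they are not mistaken for
    dropouts while the HIT is still running.
    """
    finished = snap.accepted - snap.in_progress
    if finished <= 0:
        return None  # too early to tell
    return 100.0 * snap.submitted / finished


def should_review(snap, threshold=80.0, min_finished=20):
    """Flag the HIT if enough Workers have finished and the rate is below threshold.

    Both defaults are hypothetical and should be tuned per study.
    """
    finished = snap.accepted - snap.in_progress
    rate = live_completion_rate(snap)
    return rate is not None and finished >= min_finished and rate < threshold


snap = HitSnapshot(accepted=60, submitted=35, in_progress=10)
print(live_completion_rate(snap))  # 70.0
print(should_review(snap))         # True
```

Here 50 Workers have a known outcome and 35 of them submitted, so the live rate is 70% and the HIT is flagged. The `min_finished` guard is a design choice meant to avoid raising alarms on the first handful of returns, when the rate is still very noisy.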